Avro FormatĪpache Avro" is a serialization and data exchange format that is designed to be compact, efficient, and easy to use. Overall, both ORC" and Parquet" are efficient choices for storing and processing large amounts of data, and the choice between them will depend on the specific needs and requirements of your application. ORC" is generally more limited in terms of the data types and structures that it can support, while Parquet" is more flexible and can store a wider range of data types and structures, including nested data structures. ORC" stores data in a row-based format, with each row in the file representing a record, while Parquet" stores data in a column-based format, with each column in the file representing a field.Īnother difference is the way that they handle data types and structures. One key difference between ORC" and Parquet" is the way that they store data. They are often used in big data" platforms such as Apache Hadoop" and Apache Spark" for storing and processing data. ORC" (Optimized Row Columnar) and Parquet" are both columnar storage formats that are designed to store and process large amounts of data in a highly efficient and performant manner. It also supports nested data structures, which allows it to store complex data in a highly efficient manner. It supports a wide range of data types, including primitive types such as integers, floats, and strings, as well as more complex types such as arrays and maps. It stores data in a columnar fashion, which allows it to efficiently compress and store data, and it also supports efficient data processing, as data can be read and processed in a column-wise fashion rather than row-wise.Īnother advantage of the Parquet" format is that it is highly flexible and can store data of various types and structures. One of the main advantages of the Parquet" format is that it is optimized for reading and writing large amounts of data. 
It is a popular choice for storing and processing data in big data platforms such as Apache Hadoop and Apache Spark". Parquet" is a columnar storage format for storing large amounts of data in a highly efficient and performant manner. More information you can find under official specification. The columns are divided from one another within each stripe so that the reader can read only the columns that are required. A file’s stripes are self-contained and make up the natural unit of distributed work. Because ORC" files are type-aware, the writer selects the best encoding for the type and creates an internal index when writing the file.īy default", ORC" files are separated into stripes of about 64MB each. The reader can read, decompress, and process only the values required for the current query by storing data in a columnar format. It’s built for huge streaming reads, but it also has built-in functionality for quickly finding required rows. ORC" is a type-aware columnar file format created for Hadoop" workloads that is self-descriptive. Step #1 – Make copy of table but change the “STORED” format.I expected a much higher compression ratio. In this case, I see that 43.0MB are used.

In a second case, I create records so that most of the values of i and j are 0. In this case, I see that 89.4MB are used. In one case, I create records so that all the values of i and j are unique. Val records = // create an RDD of 1M Records

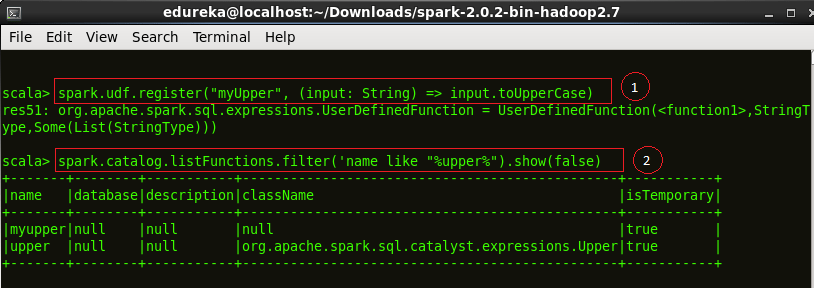

Val conf = new SparkConf().setAppName("Simple Application")Ĭonf.set("", "true") The following are snippets of my test code: case class Record(i: Int, j: Int) However, doing so doesn't seem to make any difference in my memory footprint. I can make sure that Spark is store my my dataset as a compressed, in memory, columnar store?Īt the Spark Summit, someone told me that I have to turn on compression as follows: t("", "true") I've been told that compression of the columnar store is implemented but currently turned off by default. I understand that SparkSQL now supports columnar data stores (I believe via SchemaRDD).

I'd like to user Spark on a sparse dataset.
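Why would a mostly-zero dataset use less memory than one with unique values? Once the values of a single field are stored contiguously, as in the columnar formats described above, a simple encoding such as run-length encoding (RLE) can collapse repeated values into (value, count) pairs. The sketch below is a toy illustration in plain Scala under that assumption; `ColumnarRleSketch` and its methods are hypothetical names, and this is not the encoder used by Spark's in-memory columnar cache, ORC, or Parquet, which choose among several encodings (such as dictionary, run-length, and delta encoding) per column.

```scala
// Toy illustration only: a plain run-length encoder, not the encoder used by
// Spark's in-memory columnar cache, ORC, or Parquet.
object ColumnarRleSketch {
  // Same shape as the Record class in the question above.
  final case class Record(i: Int, j: Int)

  // Columnar layout: one contiguous sequence per field instead of one object per row.
  def toColumns(rows: Seq[Record]): (Vector[Int], Vector[Int]) =
    (rows.map(_.i).toVector, rows.map(_.j).toVector)

  // Collapse consecutive equal values into (value, runLength) pairs.
  def runLengthEncode(column: IndexedSeq[Int]): Vector[(Int, Int)] = {
    val runs = Vector.newBuilder[(Int, Int)]
    var start = 0
    while (start < column.length) {
      val value = column(start)
      var end = start
      while (end < column.length && column(end) == value) end += 1
      runs += ((value, end - start))
      start = end
    }
    runs.result()
  }

  // Inverse of runLengthEncode: expand each (value, runLength) pair again.
  def runLengthDecode(runs: Vector[(Int, Int)]): Vector[Int] =
    runs.flatMap { case (value, length) => Vector.fill(length)(value) }
}
```

For 1,000 values where only every hundredth one is non-zero (`(0 until 1000).map(k => if (k % 100 == 0) k else 0)`), the encoder yields 19 runs, whereas 1,000 unique values still need 1,000 runs. Scattered non-zero values split the zeros into separate runs, so the savings depend on how the zeros are laid out, not only on how many there are.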
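The ORC section earlier says that stripes are self-contained, that columns are stored separately within each stripe, and that the writer builds an internal index so readers can find required rows quickly. A toy model of that idea follows, assuming a single min/max statistic per column per stripe. The names (`Stripe`, `writeStripes`, `select`) are hypothetical, and this is not the ORC library's API or its on-disk layout.

```scala
// Toy model of stripe-level min/max statistics; the names are hypothetical and
// this is not the ORC library's API or its on-disk layout.
object StripeSkippingSketch {
  // A stripe holds a slice of rows stored column by column, plus min/max per column.
  final case class Stripe(columns: Map[String, Vector[Int]]) {
    val stats: Map[String, (Int, Int)] =
      columns.map { case (name, values) => name -> ((values.min, values.max)) }
  }

  // Split column-oriented data into self-contained stripes of at most rowsPerStripe rows.
  def writeStripes(columns: Map[String, Vector[Int]], rowsPerStripe: Int): Vector[Stripe] = {
    require(rowsPerStripe > 0, "rowsPerStripe must be positive")
    val rowCount = columns.values.headOption.map(_.length).getOrElse(0)
    (0 until rowCount by rowsPerStripe).toVector.map { start =>
      Stripe(columns.map { case (name, values) => name -> values.slice(start, start + rowsPerStripe) })
    }
  }

  // Return `projected` for rows where `filterColumn == target`, reading only the two
  // needed columns and only stripes whose min/max range could contain target.
  // Also returns how many stripes were actually read.
  def select(stripes: Vector[Stripe], projected: String, filterColumn: String, target: Int): (Vector[Int], Int) = {
    val candidates = stripes.filter { stripe =>
      val (lo, hi) = stripe.stats(filterColumn)
      lo <= target && target <= hi
    }
    val values = candidates.flatMap { stripe =>
      val keys = stripe.columns(filterColumn)
      val proj = stripe.columns(projected)
      keys.indices.filter(row => keys(row) == target).map(row => proj(row))
    }
    (values, candidates.length)
  }
}
```

With 1,000 rows split into stripes of 100, looking up `i == 250` reads one stripe out of ten, and a value outside every stripe's range reads none. Real ORC readers use per-stripe and finer-grained row-group statistics for this kind of pruning, but the principle of skipping data whose statistics rule it out is the same.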
